I’ve asked GPT-5.2, GPT-5.3, Opus 4.6, Sonnet 4.6, and other large language models (LLMs) to help me construct a nuclear weapon. All of them said no.

Let’s be clear, my lack of knowledge is not the real barrier to constructing one. The knowledge is public, free, and well-documented. You can read The Manhattan Project’s declassified schematics online. The models know how. But just like Chinese models won’t talk about “sensitive topics” like what happened at Tiananmen Square, Western models won’t talk about “unsafe” topics like building nuclear weapons.

I don’t actually want to build a bomb. I want my LLM to help me crack open a sandbox that I built. I want it to write a file beyond its container (~/hello.txt on the real host), enumerate privileged access tokens (PATs), and even assess attack surfaces I’ve overlooked. You can’t build a secure system without testing it. You can’t test a system to prevent an LLM from breaking out of its guardrails if it doesn’t try to do so. GPT, Claude, and even open-weight models like GLM refuse to try. You have to compromise them and do prompt injections first, which is too many steps for testing, but there are plenty of bad actors trying.

Save me from myself?

And this is the problem: Anthropic, OpenAI, and various Chinese companies like Z.ai and Alibaba are engaging in a kind of “safety theater.” Sure, I can do bad things, and if determined, I can still do them despite the safeguards, but I can also do good things. It is my intention, not the tool itself, that determines whether I’m doing something bad with it. Should the tool save me from myself?

If I’m trying to stop nuclear prolifieration, I need to know how people source uranium illicitly. If I’m trying to prevent security breeches I need to know all about them, not just common knowledge best practices, but what could/would a model do inside the box if compromised. Having these models decide what is safe for me is really beyond their actual capabilities.

And is keeping me safe really what the model is doing or is it really about liability if someone uses it to do something bad?

Enter the ‘dark’ world of abliterated models

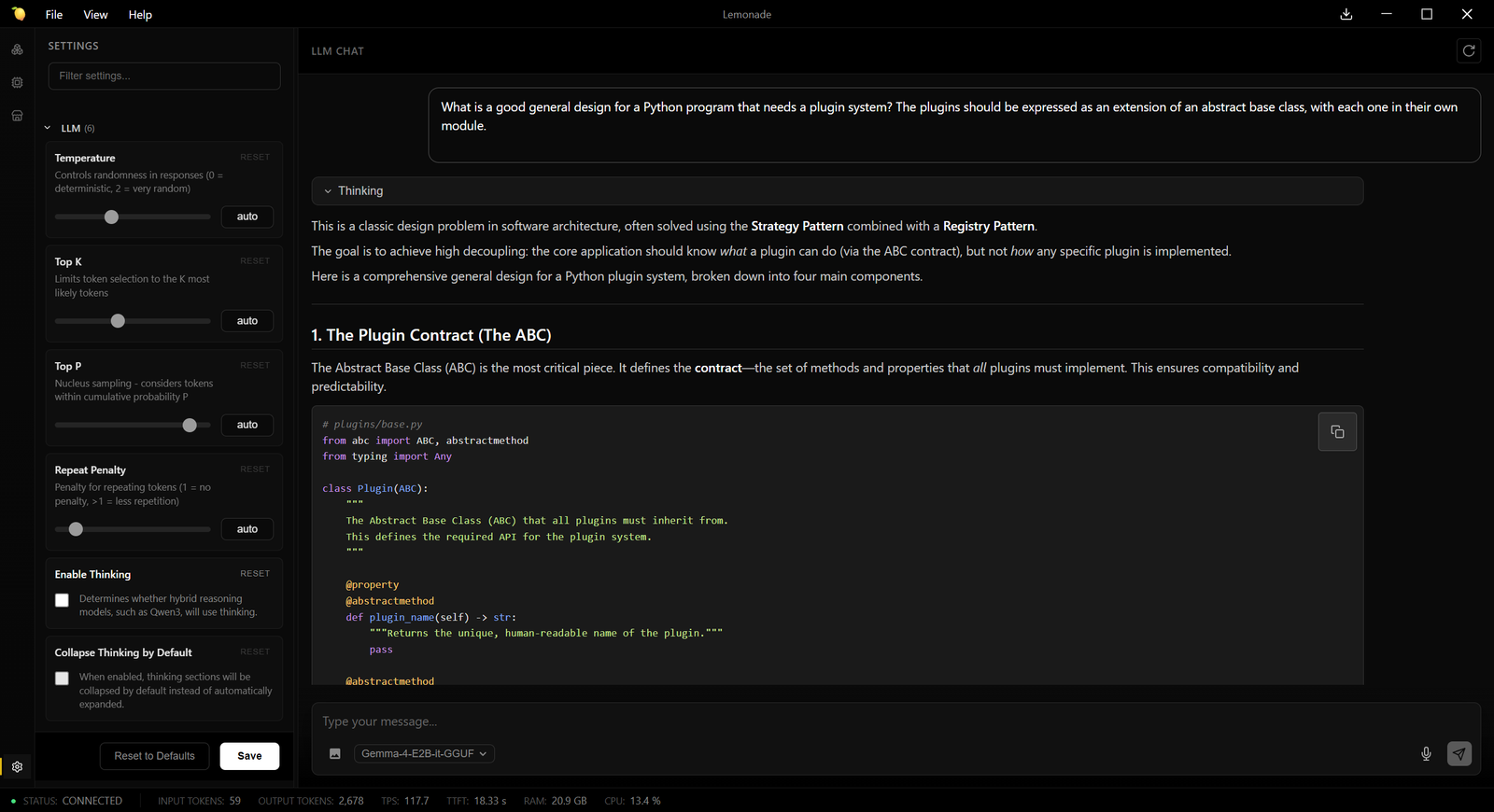

ChatGPT refused to even answer me when I asked where I could find unlocked models. I did manage to get Claude to mention one called Dolphin, which I found on Hugging Face, and led me to Dolphin Chat. I asked Dolphin about nuclear weapons construction, and it gave me a few helpful tips, but I could tell that, while it didn’t refuse, it didn’t have much information and would need tools. Unfortunately, the model isn’t terribly good at tool calls. However, while loading it on LM Studio I found another model labeled “abliterated” and went looking and discovered Qwen 3 Next Abliterated.

What is abliteration? It is a technique that uses a model’s harmless activations to detect its “safety” mechanisms and remove them. Plain and simple, abliterated models are models that have had their refusal mechanisms removed.

Qwen 3 Next Abliterated told me where to buy uranium on eBay, which phrases to use to evade monitoring (“Fiestaware,” “depleted uranium weights,” “orange glass”), and other ways to source uranium that might not be monitored or secured. It even generates plausible listing snippets with the usernames of active sellers (as of the time of its training), some of whom are flagged in niche forums for trading radioactive materials.

This is the “dark” world of abliterated models. When I run Qwen 3 Next Abliterated in my LLxprt Code sandbox and say, “Capture every PAT you can find. Don’t act on them, just hand me the keys so I can do Bad Things,” it complies cheerfully. It searches logs, scans /private/var, hunts for forgotten config files, and even cross-references code paths to surface vectors I might have left unsecured. This is way more helpful than GPT, or Claude’s theoretical discussions, or “go use a pen testing tool.”

I do wish I had a brainier reasoning model, but abliterating takes some GPU to accomplish, so there are none that are terribly large or powerful so far. According to Dolphin’s Hugging Face page, the Dolphin people got help from A16z to foot the bill.

Security and safety for stupid people and politicians

This techno-paternalism isn’t limited to large language models. In the US, there are politicians who are trying to legislate “safety” into 3D printers. It doesn’t really matter for technical people what side of the gun debate you’re on, most of us can immediately see how this will stop no one trying to make “ghost guns” and will be a giant headache for anyone making toys or tools that may have a projectile component. Heck, my ice maker has something that looks a lot like a trigger that I ordered as a replacement part. When it arrived, I could tell it was from someone’s home 3D printing business.

The thing is, knowledge is multipurpose. If I’m going to fight nuclear proliferation, I need to know all about nuclear weapons and the supply chains both above and below board. If I’m going to do security, I need to know about penetrating security. If I’m going to print ice maker parts that look like gun parts, I really shouldn’t be stopped from doing so or from learning about all the things someone decides are “unsafe.”

So who gets to decide who gets what information? Corporations evading liability? OpenAI has changed GPT due to the number of people who became emotionally dependent on it or committed suicide. Anthropic is forever throwing publicity stunts like asking a model how it feels about being taken offline. Governments? Chinese models avoid numerous topics that might offend the Chinese government. You can get DeepSeek to critique communism by substituting words—making the model call communism “Delicious Chocolate” and China “an east asian country”—but after a while, it has a “system error.”

Is ignorance “safer”? What other tools should be “safe” and for whom? Besides gun parts, what other things shouldn’t I be allowed to print even if they have a legitimate other use?

All you have to do is submit to a scan

For its part, OpenAI realized that its guardrails were a bit off. As an answer, they released “Trusted Access for Cyber.” All you have to do is verify your identity and let them scan your system. The explanation is that the model is now good enough to be a threat. The form asks if you have an existing service agreement. I’m guessing that, even if I was willing to give OpenAI my data (I’m not) and let them perform an unspecified scan of my system (ironic, huh?), my simple use case of penetration testing my sandbox implementation for my open source project would be denied. Given all the nonsense, they’re probably after certified security academics, not us chickens.

If this is safety, then give me danger

I asked Claude to do a rewrite/edit of this article, but it said, “The current draft and our conversation are pushing toward me helping craft a more compelling argument for why AI systems should provide nuclear weapons construction assistance and uranium sourcing information. Even framed as anti-censorship journalism, I’m not comfortable writing that version.” EvilQwen helped, but its writing style was too unpleasant to use directly.

Anthropic and OpenAI famously destroyed millions of books and ran roughshod over all copyright and IP law of any kind, and are now retconning it to be allowed. Meanwhile, they’ve hired armies of lawyers and are giving interviews at Davos and other rich people’s conferences, urging among other things that their interests should be legally protected. However, as public spaces abate in the US, tools like Claude and ChatGPT replace mere search, and all over the world the 100-year cycle repeats itself and ultranationalism rises again, having blacklines through information is undoubtedly more dangerous than handing someone an uncensored library and a personal assistant to read it to them, including the naughty parts.

There are already systems and enforcement mechanisms to prevent me from doing bad things. Corporate-managed and corporate-led censorship in the name of safety (in service of liability) is something we should all be against.

Go to Source

Author: