Datadog’s latest State of AI Engineering report points to a measurable failure problem in enterprise AI systems. Around 1 in 20 requests already fail in production, yet systems continue to run and return outputs that appear correct, which makes these failures difficult to detect. A 5% failure rate is very high by production engineering standards.

Along with rising failure rates, the report also highlights increasing complexity and instability in production environments. The concern is not systems going down. It is systems that keep running while becoming less trustworthy.

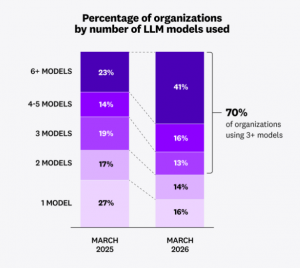

What stands out in the report is how several trends are colliding at once. AI usage is moving into production at a rapid pace, failure rates are starting to show up more clearly, and system design is becoming more complex as teams combine multiple models, data sources, and tools into a single pipeline. Datadog notes that around 70% of organizations are already using three or more models in production, which adds another layer of coordination.

In some cases, agent-based workflows are layered on top, introducing even more variability. Each of these layers adds capability, but each also increases the chance that something goes wrong without being immediately visible. That is where the silent failure problem begins to take hold.

Most Organizations Are Now Multi-Model (Image Credits: Datadog)
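A rough back-of-the-envelope model shows why stacking layers matters. The sketch below assumes, purely for illustration, that each stage in a pipeline (a model call, a retrieval step, a tool call, an agent step) fails independently at a fixed rate. The function name and the 1% figure are hypothetical and do not come from the Datadog report. Under that assumption, the chance that a request succeeds end to end is the product of each stage's success probability, so small per-stage error rates add up quickly.

```python
from math import prod
from typing import Iterable


def end_to_end_failure_rate(stage_failure_rates: Iterable[float]) -> float:
    """Probability that at least one stage fails, assuming stages fail independently."""
    # P(success) = product of (1 - p_i) over all stages; failure is the complement.
    return 1.0 - prod(1.0 - p for p in stage_failure_rates)


# Hypothetical pipeline: five stages, each failing 1% of the time.
rate = end_to_end_failure_rate([0.01] * 5)
print(f"{rate:.2%}")  # 4.90%
```

With five stages at 1% each, roughly 4.9% of requests fail end to end even though no single component looks unreliable. Real failures are rarely independent, and the report does not attribute its 1-in-20 figure to this mechanism, so treat this as intuition rather than a model of any specific system.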

“AI is starting to look a lot like the early days of cloud,” said Yanbing Li, Chief Product Officer at Datadog. “The cloud made systems programmable but much more complex to manage. AI is now doing the same thing to the application layer. The companies that win won’t just build better models – they’ll build operational control around them. In this new era, AI observability becomes as essential as cloud observability was a decade ago.”

What makes these findings more significant is where the data comes from. Datadog is not surveying developers or collecting opinions. It is analyzing production telemetry from thousands of companies running AI systems live. That includes a growing number of agent-based environments, where models are not just generating outputs but driving multi-step workflows.

Across these systems, the report points to operational complexity as the primary barrier to scaling AI reliably, with most organizations already running multiple models in production. As these systems expand, the challenge is no longer getting them to work, but keeping them understandable and controllable once they are deployed.

“The next wave of agent failures won’t be about what agents can’t do but what teams can’t observe,” said Guillermo Rauch, CEO at Vercel, the company behind Next.js and a leading platform for building AI-powered web applications. “We built agentic infrastructure at Vercel because agents need the same production feedback loops as great software. Unlike traditional software, agents have control flow driven by the LLM itself, making observability not just useful, but essential.”

Another pattern in the report is that many of these failures are driven not by model quality but by infrastructure limits. A large share of errors comes from rate limits, with millions of such events recorded across production systems. As usage grows, systems hit provider capacity ceilings more often, creating bursts of failures that are hard to predict. In practice, reliability is shaped as much by how teams manage load, retries, and concurrency as by how well the model performs.
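One common way to absorb bursts of rate-limit errors is to retry with capped exponential backoff and random jitter, so that many clients do not hit the provider again at the same moment. The Python sketch below is a generic illustration, not Datadog's recommendation or any provider SDK's API. `RateLimitError` is a hypothetical stand-in for whatever exception a given client raises on an HTTP 429, and the delay parameters are placeholder values. The `sleep` and `rng` hooks exist only so the behavior can be tested deterministically.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimitError(Exception):
    """Hypothetical stand-in for a provider's rate-limit (HTTP 429) error."""


def call_with_backoff(
    fn: Callable[[], T],
    max_retries: int = 5,
    base_delay: float = 0.5,
    max_delay: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
    rng: Callable[[], float] = random.random,
) -> T:
    """Call fn, retrying on RateLimitError with capped exponential backoff and full jitter."""
    for attempt in range(max_retries + 1):
        try:
            return fn()
        except RateLimitError:
            if attempt == max_retries:
                # Retry budget exhausted: surface the failure instead of hiding it.
                raise
            # Full jitter: wait a random fraction of min(max_delay, base_delay * 2**attempt).
            cap = min(max_delay, base_delay * (2 ** attempt))
            sleep(rng() * cap)
    raise RuntimeError("max_retries must be non-negative")
```

Two design choices matter here. Jitter spreads retries out over time instead of synchronizing them into a new burst, and the function re-raises once its retry budget is spent rather than returning a fallback, so the failure stays visible instead of becoming a silent one. In production this would usually sit behind a concurrency limit, such as a `threading.Semaphore` or `asyncio.Semaphore`, so that retries do not push total load past the provider's ceiling.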

At BigDATAwire, we’ve covered how the focus is no longer just on building the most powerful models. Instead, data is emerging as the core source of value for AI.

According to Datadog’s findings, cost and latency are becoming harder to control. Token usage has more than doubled for typical workloads and increased even faster for heavy users. What is driving that growth is not just user input, but the expanding layer of system prompts, policies, and tool instructions that are repeatedly processed in each request. These background tokens now account for a large share of total usage, which means costs can rise even when user demand appears stable.
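A small worked example shows how this plays out. The sketch below models a single request's token budget; the field names and every token count are hypothetical, chosen only to illustrate the mechanism the report describes. Because the system prompt and tool definitions are re-sent on every call, growing them raises total usage even when what users send stays the same.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RequestTokens:
    """Token counts for one request (all values in the example are hypothetical)."""

    system_prompt: int     # instructions and policies re-sent on every call
    tool_definitions: int  # tool schemas and instructions re-sent on every call
    user_input: int        # what the user actually typed
    output: int            # tokens generated by the model

    @property
    def background(self) -> int:
        return self.system_prompt + self.tool_definitions

    @property
    def total(self) -> int:
        return self.background + self.user_input + self.output


def monthly_tokens(req: RequestTokens, requests_per_month: int) -> int:
    return req.total * requests_per_month


before = RequestTokens(system_prompt=1500, tool_definitions=2000, user_input=300, output=200)
# Same user behavior, but the team added more policies and more tools.
after = RequestTokens(system_prompt=2500, tool_definitions=3000, user_input=300, output=200)

print(before.background / before.total)  # 0.875
print(monthly_tokens(after, 1_000_000) / monthly_tokens(before, 1_000_000))  # 1.5
```

In this made-up case, background tokens already make up 87.5% of each request, and total usage grows by 50% with no change in user input.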

Despite this, basic efficiency gains are often left on the table. The report shows that prompt caching is still underused, with most systems reprocessing the same context across calls. That points to a gap between how AI systems are built and how they are optimized in production. As context windows expand and prompts grow larger, the challenge is shifting from fitting more data into the model to deciding what information actually matters.
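Prompt caching generally works on repeated prompt prefixes: if the start of a request matches one the provider has recently processed, that portion can be reused instead of recomputed. The sketch below is a simplified, provider-neutral simulation under that assumption. Real services add their own rules, such as minimum prefix lengths, block granularity, and cache expiry, and the function names here are hypothetical. It counts how many tokens must be processed from scratch and shows why ordering matters: placing the stable system prompt and tool instructions first lets later calls reuse them, while placing the user's message first breaks the match.

```python
from typing import List, Sequence


def shared_prefix_len(a: Sequence[str], b: Sequence[str]) -> int:
    """Length of the longest common prefix of two token sequences."""
    n = 0
    for x, y in zip(a, b):
        if x != y:
            break
        n += 1
    return n


def uncached_tokens(prompts: List[List[str]]) -> int:
    """Tokens processed from scratch, assuming an idealized cache of every earlier prompt."""
    seen: List[List[str]] = []
    total = 0
    for prompt in prompts:
        # Reuse the longest prefix this prompt shares with any earlier prompt.
        hit = max((shared_prefix_len(prompt, s) for s in seen), default=0)
        total += len(prompt) - hit
        seen.append(prompt)
    return total


# Hypothetical workload: a 1,000-token static context and three 20-token user turns.
static = [f"s{i}" for i in range(1000)]
turns = [[f"u{k}_{i}" for i in range(20)] for k in range(3)]

static_first = [static + t for t in turns]
user_first = [t + static for t in turns]

print(uncached_tokens(static_first))  # 1060
print(uncached_tokens(user_first))    # 3060
```

In this toy case the static-first layout processes roughly a third as many tokens. Actual savings on a real service depend on its caching rules and pricing, which differ between providers.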


The post Datadog Report: The Silent Failure Problem in AI Is About to Hit Enterprise System appeared first on BigDATAwire.


Author: Ali Azhar