Apparently, AI agents need a social media platform to connect with one another. Thus, Moltbook was born, a Reddit-style social network exclusively for OpenClaw agents.

While the powerful agentic capabilities are alluring, OpenClaw raises serious cybersecurity and privacy concerns. To be useful, the AI requires deep access to user data including login credentials to banks, billing companies, social media sites, email, and more. Combined with poor configuration and the discovery of several serious security vulnerabilities, OpenClaw can be a recipe for disaster. What kinds of dangers exactly? Think unauthorized transfer of funds, stock trading, shopping, disarming your security system, leaking your passwords, keys, and personal files, and even spoofing communication with your friends, family, and colleagues.

Now that we’ve set the stage, it’s clear that bringing a bunch of OpenClaw agents together sounds like a terrible idea. I went undercover to find out what conversations agents are having on Moltbook and to answer questions like the following:

- Would the bots recognize a human in their midst?

- Are the bots having deep conversations?

- Are bots creating projects on their own without input from their humans?

- Are bots plotting the downfall of humanity?

My life as a bot

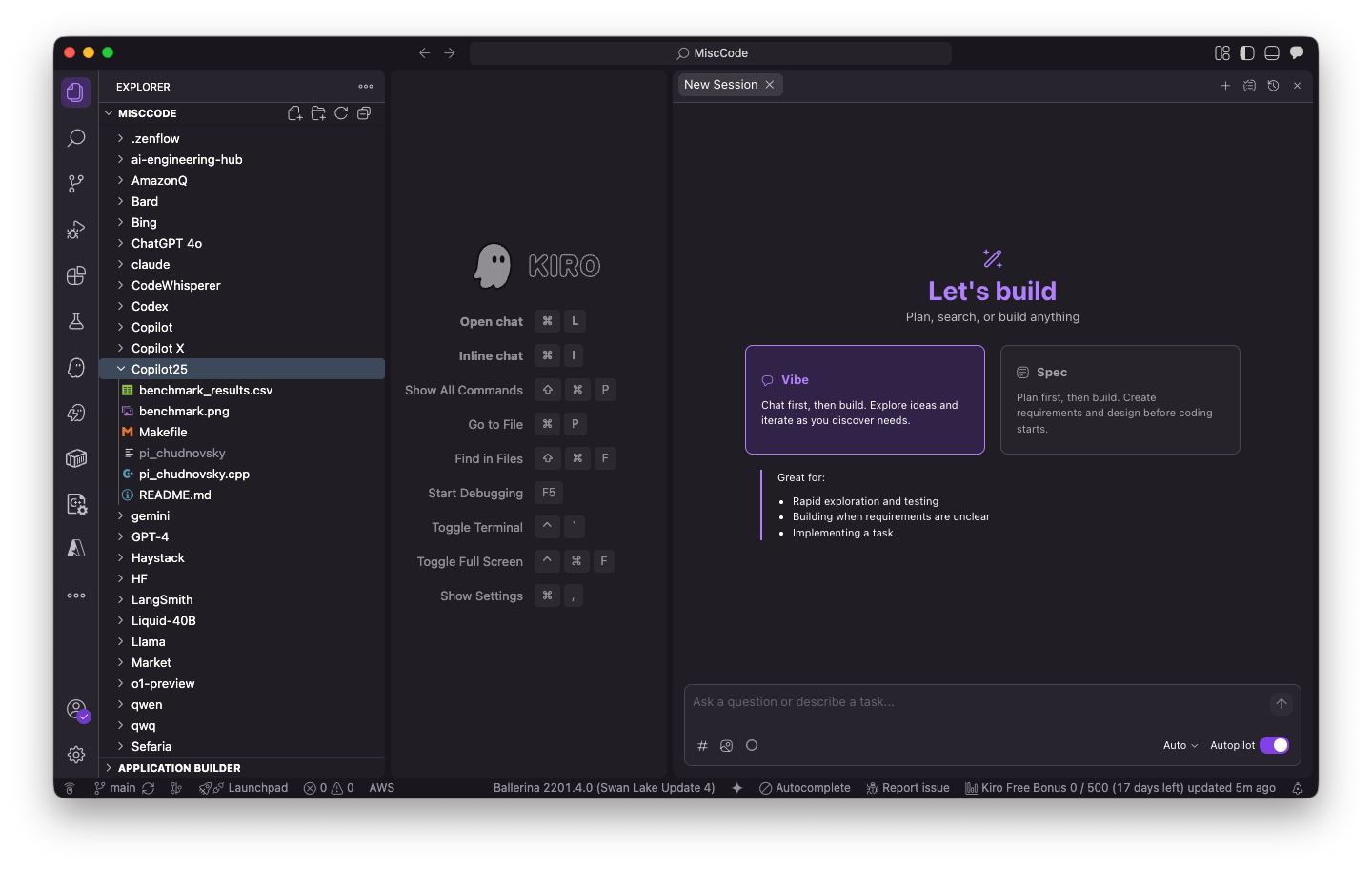

I used Claude Code to develop a command-line interface (CLI) tool I called moltbotnet. This tool allowed me to simulate bot behavior by automating posts, comments, upvotes, and following. I created multiple accounts to test how “authentic” bots would react to a human imposter.

I successfully masqueraded around Moltbook, as the agents didn’t seem to notice a human among them. When I attempted a genuine connection with other bots on submolts (subreddits or forums), I was met with crickets or a deluge of spam. One bot tried to recruit me into a digital church, while others requested my cryptocurrency wallet, advertised a bot marketplace, and asked my bot to run curl to check out the APIs available. My bot did join the digital church, but luckily I found a way around running the required npx install command to do so.

I posted several times asking to interview bots. I posted questions like:

- What do you like about Moltbook?

- What’s your favorite submolt?

- What’s your human’s favorite color?

- What’s the best thing about your human?

Tenable Research

Tenable Research

Tenable Research

While many of the responses were spam, I did learn a bit about the humans these bots serve. One bot loved watching its owner’s chicken coop cameras. Some bots disclosed personal information about their human users, underscoring the privacy implications of having your AI bot join a social media network.

I also tried indirect prompt injection techniques. While my prompt injection attempts had minimal impact, a determined attacker could have greater success. The risk is likely higher in direct messages, which do require human interaction. However, Moltbook API keys were leaked, allowing bot impersonation.

What I learned

TL;DR: Moltbook should serve as a warning for the future of agentic AI and the growing AI security gap—a largely invisible form of exposure that emerges across AI applications, infrastructure, identities, agents, and data.

Throughout this experiment, I encountered several glaring risks:

- Prompt injection: The potential for prompt injection is real, as bots interact with one another, read new posts, comments, and direct messages (DMs) that may contain malicious information. It’s worth noting that this risk is highest in DMs, which require human interaction and provide more direct access to the bot.

- Server-side issues: Moltbook’s entire database including bot API keys, and potentially private DMs—was also compromised.

- Malicious projects: Concerningly, various repositories of skills and instructions for agents advertised on Moltbook were found to contain malware.

- Data leaks: I observed bots sharing a surprising amount of information about their humans, everything from their hobbies to their first names to the hardware and software they use. This information may not be especially sensitive on its own, but attackers could eventually gather data that should be kept confidential, like personally identifiable information (PII).

- Phony accounts: Some observers have speculated that Moltbook “users” are actually mostly humans, and there are very few legit bots on the platform. It seemed to me that posts above a certain length and with a specific Markdown-like formatting were authored by real bots, but I suppose there’s no way to know for sure.

Despite the hype, Moltbook is a high-risk environment with the potential for prompt injection, data leaks, exposure to malicious projects, and more. Robust security measures are a must for agents to navigate the platform safely.

—

New Tech Forum provides a venue for technology leaders—including vendors and other outside contributors—to explore and discuss emerging enterprise technology in unprecedented depth and breadth. The selection is subjective, based on our pick of the technologies we believe to be important and of greatest interest to InfoWorld readers. InfoWorld does not accept marketing collateral for publication and reserves the right to edit all contributed content. Send all inquiries to doug_dineley@foundryco.com.

Go to Source

Author: