The rise of AI over the past two years or so has often been framed as a high-stakes race: bigger models, unbelievable company valuations, more compute, and bigger data centers. Every milestone reinforced the meteoric trajectory of AI's growth. Could AI keep scaling without limit?

Many hyperscalers and governments aligned around that vision, committing unprecedented capital to build the infrastructure needed to support it. At least in terms of scaling, it has been relatively smooth sailing. Now those AI ambitions are staring down a $7 trillion reality check.

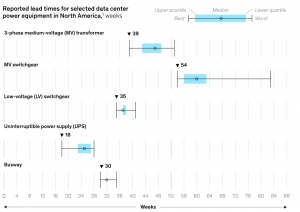

According to a McKinsey report, building out the world's AI infrastructure will cost more than $7 trillion by 2030. That figure is less a forecast of discretionary spending than an estimate of what it will cost to build the global AI infrastructure that is already being planned. Around 100 to 110 gigawatts of new data center capacity is in planning worldwide, and typical build costs can reach tens of billions of dollars per gigawatt. Multiply the two, and the total quickly approaches the staggering $7 trillion mark.
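As a rough sanity check on those numbers, assume (as a simplification) that the entire $7 trillion is spread across the 100 to 110 GW of planned capacity. The implied average cost per gigawatt then works out as:

```latex
\frac{\$7 \times 10^{12}}{110\ \text{GW}} \approx \$64 \times 10^{9}\ \text{per GW},
\qquad
\frac{\$7 \times 10^{12}}{100\ \text{GW}} = \$70 \times 10^{9}\ \text{per GW}
```

That is roughly $64 billion to $70 billion per gigawatt, consistent with build costs in the "tens of billions of dollars per gigawatt" range. The true per-gigawatt cost depends on which components the $7 trillion figure covers, so treat this as an illustration rather than a McKinsey estimate.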

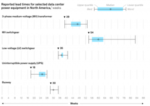

Lead times for critical equipment are growing, reaching more than 50 weeks in some cases. (Credit: McKinsey)

The commitments already on the table are neither small nor experimental. Microsoft is putting tens of billions of dollars into expanding AI infrastructure, much of it tied to OpenAI. Amazon keeps building out data center capacity as AI demand starts to reshape AWS from the inside.

Google is doing the same, pushing more capital into its global footprint to support Gemini and everything around it. On the hardware side, Nvidia takes center stage, with demand for its chips pulling even more spending upstream.

Governments are keen to invest in AI and to gain greater control over its evolution. Saudi Arabia and the UAE are backing large-scale AI infrastructure programs with sovereign capital. The US continues to channel funding into semiconductors and domestic data center capacity. You start to see the pattern pretty quickly. This is money that is already committed, and in many cases already being spent, long before the full cost of the system is even clear.

There is also the question of what exactly justifies the $7 trillion spend. Much of the conversation frames it as "inevitable": AI will transform every industry, demand will keep rising, and more compute will always translate into more value. But the early enterprise signals are more mixed.

Companies are still struggling to turn pilots into production systems. Data readiness remains a bottleneck, especially for unstructured data. Many AI workloads are still experimental, not mission-critical. Even where returns exist, they are often uneven and difficult to measure. That creates a mismatch.

Infrastructure is being built on long-term expectations of demand, while actual monetization is still catching up. Look at where usage actually is today. A few areas are pulling most of the weight: coding assistants, customer support automation, and perhaps some internal productivity tools.

Beyond that, a lot of companies are still experimenting. Running pilots. Trying things that may or may not stick. That is a very uneven demand curve. You do not have every enterprise suddenly needing massive compute at the same time. You have bursts of demand in specific pockets, while the rest of the market is still figuring itself out. That matters because infrastructure does not scale in pockets. It gets built upfront, typically at full capacity.

If adoption slows or consolidates around fewer use cases, parts of that infrastructure could sit underutilized. We have seen this pattern before in other technology cycles. The difference here is the scale.

AI was initially framed as software: fast-moving, scalable, and relatively asset-light compared to traditional industries. That framing is no longer accurate. What is emerging now looks closer to a utility model: large, centralized infrastructure, massive upfront investment, long payback periods, and heavy dependence on energy and physical assets.

This shifts who can realistically compete. Only a handful of companies can deploy capital at this scale. Even fewer can operate globally integrated systems across compute, data, and energy. That naturally concentrates power.

Then there is the question of who actually makes money in this setup. If you are building apps on top, you are renting everything: the compute, the models, the storage. Your margins depend on someone else's pricing.

Meanwhile, the companies that own the data centers, the chips, and the energy contracts are sitting in a very different position. They are not guessing where demand will go. They are getting paid either way, as long as usage shows up somewhere. Over time, that shifts where the real power sits. It is less about who builds the smartest application, and more about who owns the system everything runs on.

It also raises the stakes. When capital intensity rises, mistakes become expensive. Overbuilding is not a small correction; it becomes a multibillion-dollar problem.

Energy becomes the defining variable

One of the most overlooked aspects of the $7 trillion figure is how much of it is effectively an energy story. AI infrastructure does not just consume electricity. It competes for it.

As data centers scale, they begin to influence regional energy markets. Prices can shift. Availability becomes constrained. New capacity has to be brought online, often through a mix of traditional and renewable sources. This creates a feedback loop. More AI demand requires more power. More power requires more infrastructure. That infrastructure then feeds back into the cost structure of AI itself.

At some point, energy is no longer a background input. It becomes a limiting factor and a strategic asset.

From acceleration to negotiation

The past two years have been defined by acceleration: faster models, larger clusters, more aggressive investment.

The next phase looks different. Scaling AI is no longer just about pushing forward. It is about negotiating constraints. Between compute and energy. Between capital and returns. Between national interests and global supply chains.

The question is no longer “how fast can AI grow.” It is “how far can this system scale before something pushes back.” And that is where the $7 trillion number really lands. Not as a projection, but as a signal that the easy phase of AI expansion may already be behind us.

The post AI Is Running Into a $7 Trillion Wall appeared first on BigDATAwire.


Author: Ali Azhar