At its recent Cloud Next event, Google unveiled advanced geospatial AI capabilities that extend how imagery is analyzed and integrated into enterprise workflows. However, that was only a starting point.

The tech giant has announced that in the coming weeks it will keep expanding its Imagery Insights portfolio with the launch of new Aerial and Satellite Insights, pushing imagery closer to something enterprises can actually use at scale.

The aim of this update is to move beyond static visuals by turning imagery into structured, machine-readable data that feeds directly into analysis and decision-making systems. In other words, the question is no longer what humans can see in an image, but what systems can infer from it.

With Aerial and Satellite Models, an energy analyst can type a prompt like “find large HVAC cooling towers”, and the model identifies relevant cooling towers across large geographies. (Credits: Maps Platform Google)

The updates are primarily centered on how data is packaged and delivered. Instead of exposing imagery as a raw input, the new offerings provide processed outputs that can be used in real time. These outputs include detection of physical objects and classification of land and infrastructure features.

Most of the heavy lifting happens behind the scenes before the user even interacts with the data. That removes the need to start from pixels and build custom interpretation layers. The geospatial signals are available in a usable format from the start.

The main difference for users is how quickly they can move from data access to usable insight. Traditional approaches required assembling a pipeline that handled processes like ingestion, labeling, model training, and analysis, and each stage introduced complexity. With Aerial and Satellite Insights, those stages are handled within the platform.

According to Google, users can access outputs directly and integrate them into analytics environments like BigQuery. Model operations, including scaling and execution, are managed through Vertex AI. This reduces the need for dedicated infrastructure and specialized expertise. The workflow becomes less about building systems and more about using the data that those systems produce.

Google has partnered with Airbus and Vexcel to expand the Google Maps catalog of aerial and satellite imagery.

“Our expanded partnership with Google underscores Airbus’ commitment to delivering the highest quality and freshest data to our partners,” said Eric Even, Head of Space Digital at Airbus Defence and Space. “By adding Airbus’ industry-leading satellite imagery to Google’s Aerial and Satellite Insights, we enable enterprise customers to move beyond visualization toward true decision-making with deeper, data-driven insights about the world in near real-time.”

Vexcel is contributing its aerial imagery library, adding more coverage to the dataset. It is also working with Google to help turn that imagery into something usable. As Erik Jorgensen, Chairman and CEO of Vexcel, put it, the work is enabling teams to “analyze it, and generate knowledge at scale.”

Google is also pointing to more direct outcomes tied to these capabilities. The company notes that Aerial and Satellite Insights can be used to optimize wireless network planning by identifying signal obstructions, monitor vegetation encroachment near critical infrastructure, and recognize new opportunities for renewable energy deployment.

In addition, Google says the approach can support site selection and asset management by enabling more comprehensive analysis and automated inventory audits. By using historical imagery, teams can detect changes over time and begin to recognize patterns, rather than reacting to issues after they appear.
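
To make the change-detection idea concrete, here is a minimal Python sketch under stated assumptions. It supposes, hypothetically, that object detections from two imagery dates have been exported as simple records carrying a label and a latitude/longitude; the `Detection` class, the `diff_snapshots` function, and the 10-meter matching tolerance are illustrative inventions, not part of any Google API. The sketch pairs objects across dates by label and distance, then reports which ones appeared and which disappeared.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    """Hypothetical record for one detected object (not a real Google schema)."""
    label: str   # e.g. "cooling_tower" or "solar_panel"
    lat: float   # degrees
    lon: float   # degrees


def haversine_m(a: Detection, b: Detection) -> float:
    """Great-circle distance in meters between two detections."""
    r = 6_371_000.0  # mean Earth radius in meters
    phi1, phi2 = math.radians(a.lat), math.radians(b.lat)
    dphi = phi2 - phi1
    dlmb = math.radians(b.lon - a.lon)
    h = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * r * math.asin(min(1.0, math.sqrt(h)))


def diff_snapshots(before, after, tolerance_m=10.0):
    """Return (added, removed) detections between two dates.

    Each later detection is paired with the first unmatched earlier detection
    of the same label within tolerance_m meters (a greedy, first-match rule).
    Unpaired later detections count as added; leftover earlier ones as removed.
    """
    unmatched_before = list(before)
    added = []
    for det in after:
        match = next(
            (c for c in unmatched_before
             if c.label == det.label and haversine_m(c, det) <= tolerance_m),
            None,
        )
        if match is None:
            added.append(det)
        else:
            unmatched_before.remove(match)
    return added, unmatched_before


if __name__ == "__main__":
    before = [Detection("cooling_tower", 40.0, -75.0), Detection("solar_panel", 40.001, -75.0)]
    after = [Detection("cooling_tower", 40.00001, -75.0), Detection("cooling_tower", 40.01, -75.0)]
    added, removed = diff_snapshots(before, after)
    print("added:", added)
    print("removed:", removed)
```

The greedy first-match pairing keeps the sketch short; a production pipeline would more likely use a spatial index and account for positional error between imagery sources and capture dates.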

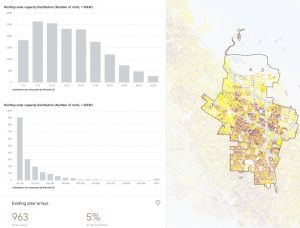

The Solar dataset helps utilities and service providers identify high-value customers and optimize network planning. (Credits: Maps Platform Google)

The Milken Institute’s Community Infrastructure Center is using Solar Insights to assess rooftop solar potential across community sites such as libraries, schools, and clinics, combining it with climate risk and demographic data to guide investment decisions.

“Solar Insights provides building and community-level clarity we’ve never had before,” shared Rachel Halfaker, Director, Milken Institute. “In minutes, our platform partners can evaluate rooftop solar potential for any community anchor facility and layer it with climate and socioeconomic data to pinpoint where resilience investments matter most. It’s already reshaping how the Milken Institute scopes clean energy projects with our community organizations.”

Google is also extending these capabilities across its cloud platform. With Aerial and Satellite Insights available through Model Garden, users can query imagery using natural language, including zero-shot detection of objects without training custom models. At the same time, integration with Earth Engine allows imagery to be combined with proprietary datasets for deeper spatial analysis. This brings geospatial data closer to the rest of the enterprise stack, rather than keeping it in a separate workflow.

The broader goal is to reduce the need for field visits and speed up how decisions are made. As Google describes it, these tools are designed to help organizations “scale operations while reducing in-the-field presence, risk, and costs.” The focus is on turning imagery into something that feeds operational decisions, rather than something that needs to be manually reviewed.


The post From Images to Usable Data: Google’s Next Leap in Geospatial AI appeared first on BigDATAwire.


Author: Ali Azhar