Most large enterprises run on deterministic software foundations. Business rules are embedded within workflows, state transitions are modeled explicitly and escalation paths are predefined. Because system behavior is specified in advance, outcomes are predictable. Meaningful scenarios are encoded as conditional branches and validated before release. For decades, this approach has delivered the reliability and control required for mission-critical operations.

This model assumes most situations can be anticipated and expressed in logic. It works well when variation is limited and conditions remain manageable. If new requirements can be added as workflow branches, the structure holds. It begins to strain when processes must respond to context — not just thresholds, but the broader circumstances of a case.

In my experience, customer onboarding in banking makes this tension visible. Onboarding sits at the intersection of digital channels, fraud detection, regulatory obligations and revenue goals. It must satisfy Know Your Customer (KYC) and Anti-Money Laundering (AML) requirements while minimizing abandonment and resisting synthetic identity attacks.

During my involvement in digital account opening initiatives at a major North American bank, cross-functional design sessions repeatedly surfaced the same trade-off. Product teams pushed to reduce friction and improve conversion while fraud teams responded to bot-driven account creation and mule schemes with additional safeguards. Compliance insisted regulatory standards be met without exception and engineering absorbed each new requirement into the orchestration framework. Individually, these decisions were rational. Collectively, they made the workflow more complex.

The underlying challenge was not a shortage of rules but the difficulty of expressing contextual judgment within a static branching structure. Differentiation occurred only at predefined checkpoints, and information was often collected in bulk rather than adapted to what was already known about the applicant. Collect too little and the institution risks regulatory exposure or fraud; collect too much and abandonment rises. Attempt to encode every variation as additional branches and the workflow becomes increasingly fragile.

Adaptive scoring and contextual models can complement deterministic logic. Rather than enumerating every scenario in advance, they help determine whether additional verification is warranted or whether progression can continue with existing evidence. Deterministic workflows still enforce regulatory requirements and final state transitions; the adaptive layer informs how the system navigates toward those outcomes.
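To make that division of labor concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the `ApplicantContext` fields, the weights, the threshold and the step names are hypothetical and not taken from any real onboarding system. The point is only that the deterministic rule is checked unconditionally, while the adaptive score decides whether extra verification is warranted.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ApplicantContext:
    existing_customer: bool
    id_document_verified: bool
    device_risk: float    # hypothetical score, 0.0 (low) to 1.0 (high)
    velocity_risk: float  # hypothetical score, 0.0 (low) to 1.0 (high)


STEP_UP_THRESHOLD = 0.5  # illustrative value only


def adaptive_risk(ctx: ApplicantContext) -> float:
    """Blend signals into one score; the weights are made up for illustration."""
    score = 0.6 * ctx.device_risk + 0.4 * ctx.velocity_risk
    # A known relationship is treated as mitigating context.
    return score * 0.5 if ctx.existing_customer else score


def next_step(ctx: ApplicantContext) -> str:
    # Deterministic rule: identity verification is never skipped.
    if not ctx.id_document_verified:
        return "verify_identity"
    # Adaptive layer: decides only whether extra verification is warranted.
    if adaptive_risk(ctx) >= STEP_UP_THRESHOLD:
        return "additional_verification"
    return "continue"
```

With the same risk signals, an existing customer may continue while a new applicant is asked for more evidence; neither can bypass the identity check.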

Although onboarding illustrates the issue clearly, the same pattern appears in credit adjudication, claims processing and dispute management. As adaptive signals enter these workflows, the architectural question shifts from adding branches to deciding where contextual judgment should reside. In my view, what is missing is not another conditional path but a different runtime model — one that interprets context and determines the next appropriate action within defined limits. This architectural layer, which I refer to as the Agent Tier, separates contextual reasoning from deterministic execution.

Introducing the agent tier: Separating execution from contextual judgment

In many enterprises, orchestration logic does not reside in a formal workflow platform. It is embedded in single-page applications (SPAs), implemented in APIs, supported by rule engines and coordinated through service calls across systems. User journeys are assembled through API calls in predefined sequences, with eligibility or routing conditions evaluated at specific checkpoints.

This approach works well for repeatable, well-understood paths. When inputs are complete, risk signals are low and no exception handling is required, the clean path can be executed deterministically. State transitions are known in advance. Service calls follow predictable patterns. Human tasks are invoked at predefined points.

The difficulty arises when the workflow encounters ambiguity. Inputs may be incomplete. Signals may require interpretation rather than simple threshold comparison. Multiple systems may need to be coordinated in a sequence not explicitly modeled. Attempting to encode every such situation into SPA logic or orchestration APIs leads to increasingly complex condition trees and harder-to-maintain code. Instead of expanding hard-coded branching indefinitely, the runtime separates into two complementary lanes: Repeatable execution and contextual reasoning.

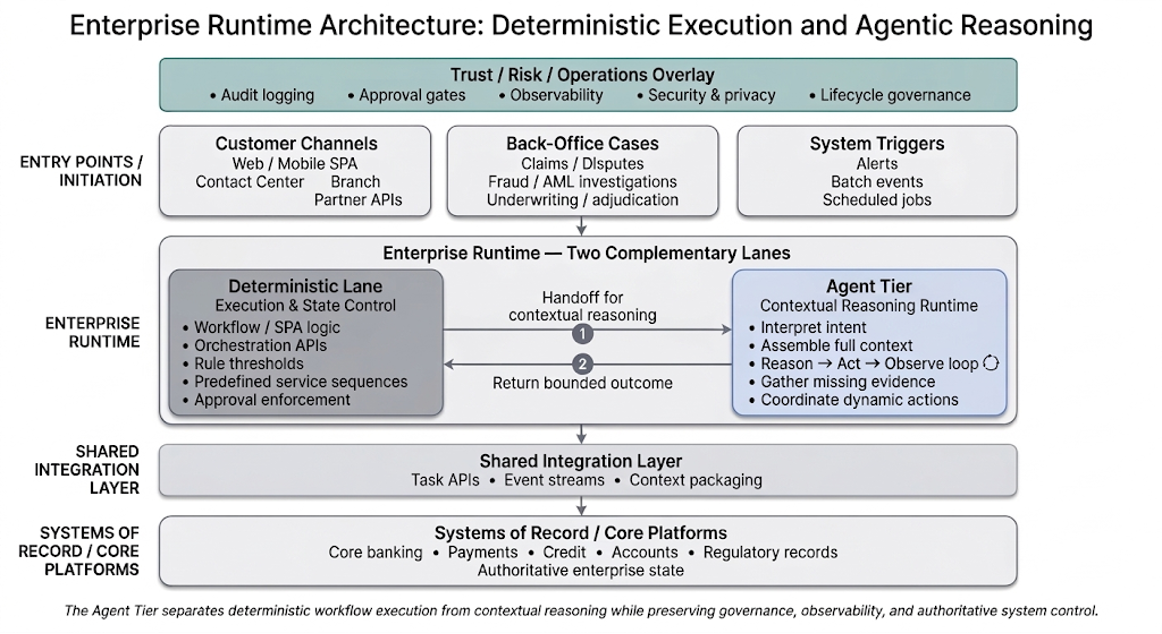

Conceptually, the enterprise runtime evolves into a two-lane structure, illustrated below.

Nitesh Varma

The deterministic lane retains control over authoritative state changes and rule enforcement. It manages eligibility checks, applies regulatory criteria, invokes known service sequences and finalizes cases in core systems. It continues to handle most predictable scenarios.

The runtime invokes the Agent Tier when contextual judgment is required. This may occur when additional evidence must be gathered before a rule can be evaluated, when multiple signals must be interpreted together rather than independently or when coordination across systems cannot be expressed through a fixed sequence. The Agent Tier evaluates the available actions and returns a bounded recommendation that allows deterministic execution to resume.

The movement between lanes is explicit. The deterministic workflow hands off when it reaches a point where static branching is insufficient. The Agent Tier performs synthesis or dynamic coordination. Once the Agent Tier produces a structured result, such as a completed evidence bundle, a validated set of inputs or a recommended next step, control returns to the deterministic lane for controlled progression and final state transition.
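The handoff described above can be sketched as follows. This is an illustrative outline under stated assumptions, not a reference implementation: `Case`, `REQUIRED_FIELDS`, the state names and `stub_agent_tier` are all hypothetical. The deterministic lane detects a gap it cannot resolve by branching, hands off, receives an evidence bundle and then re-applies its own rule before changing state.

```python
from dataclasses import dataclass, field
from typing import Callable

REQUIRED_FIELDS = ("name", "date_of_birth", "address")  # illustrative rule


@dataclass
class Case:
    case_id: str
    inputs: dict
    evidence: dict = field(default_factory=dict)
    state: str = "RECEIVED"


def missing_fields(case: Case) -> list:
    known = {**case.inputs, **case.evidence}
    return [f for f in REQUIRED_FIELDS if f not in known]


def run_deterministic_lane(case: Case, agent_tier: Callable[[Case, list], dict]) -> Case:
    gaps = missing_fields(case)
    if gaps:
        # Explicit handoff: static branching cannot resolve the gap.
        case.evidence.update(agent_tier(case, gaps))
    # Control is back in the deterministic lane, which re-applies the rule
    # and owns the state transition.
    case.state = "MANUAL_REVIEW" if missing_fields(case) else "READY_TO_OPEN"
    return case


def stub_agent_tier(case: Case, gaps: list) -> dict:
    """Stand-in for contextual reasoning: fills gaps from a fake customer record."""
    on_file = {"address": "on file from existing relationship"}
    return {f: on_file[f] for f in gaps if f in on_file}
```

Note that the Agent Tier stand-in never sets `state`; it only returns evidence, and the deterministic lane decides what that evidence permits.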

This separation allows incremental adoption. Existing SPA logic and orchestration APIs remain intact; ambiguity points can be redirected to the Agent Tier without destabilizing deterministic execution.

What happens inside the agent tier

The Agent Tier is not a single “AI decision.” It is a structured reasoning cycle that combines interpretation with controlled action.

When the deterministic workflow hands off a case, the Agent Tier interprets the current situation by assembling available context — user inputs, existing customer relationships, fraud signals, journey state and relevant policy constraints. Based on that composite view, it selects the next action from an approved set of enterprise capabilities. That action might involve retrieving additional information, invoking a verification service, requesting clarification from the user or coordinating multiple systems in sequence. Once the action completes, the result is evaluated and the cycle continues until deterministic execution can resume.

This alternating pattern of reasoning and action is common in agentic system design. In technical literature, it is often referred to as the ReAct (Reason and Act) pattern, which interleaves reasoning steps with structured action selection. Rather than attempting to reach a final answer in a single pass, the system gathers evidence, reassesses its position and proceeds incrementally. In enterprise settings, this pattern becomes a disciplined way to manage contextual interpretation.
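As a rough illustration of the reason-and-act cycle, the sketch below alternates between choosing an action and folding its result back into the context, with a hard cap on iterations. The function names, the `"resume"` sentinel and the stand-in policy are assumptions made for this example; in practice the `choose_action` step might be backed by a model rather than simple conditionals.

```python
from typing import Callable, Dict, List, Tuple

Action = Callable[[dict], dict]


def reason_act_loop(
    context: dict,
    choose_action: Callable[[dict], str],
    actions: Dict[str, Action],
    max_steps: int = 5,
) -> Tuple[dict, List[str]]:
    trace: List[str] = []
    for _ in range(max_steps):
        name = choose_action(context)           # reason over current context
        if name == "resume":
            return context, trace
        if name not in actions:                 # only approved actions may run
            raise ValueError(f"action not approved: {name}")
        context = {**context, **actions[name](context)}  # act, then observe
        trace.append(name)
    # Bounded iteration: stop and flag the case instead of looping forever.
    return {**context, "needs_manual_review": True}, trace


def choose_action(context: dict) -> str:
    """Stand-in policy; a real system might use a model here."""
    if "address" not in context:
        return "lookup_address"
    if "id_verified" not in context:
        return "verify_identity"
    return "resume"


ACTIONS: Dict[str, Action] = {
    "lookup_address": lambda ctx: {"address": "on file"},
    "verify_identity": lambda ctx: {"id_verified": True},
}
```

Calling `reason_act_loop({"name": "A"}, choose_action, ACTIONS)` runs two actions and then hands back; lowering `max_steps` to 1 shows the bounded-iteration fallback instead.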

Reasoning in the Agent Tier does not involve free-form system access. It proceeds through approved operations, commonly called tools, that are exposed via governed interfaces. In practice, these tools are enterprise primitives such as:

- APIs that retrieve or update enterprise data

- event triggers that initiate downstream processing

- workflow actions that advance a case

- controlled service calls into core or third-party systems

Each operation is defined by explicit input/output contracts and permission boundaries and carries metadata describing its purpose and constraints. The runtime selects from this governed catalog — a mechanism commonly referred to as tool calling. Some frameworks further group related tools into higher-level capabilities known as skills, reusable functions for objectives such as identity verification or KYC evidence assembly.
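One way to picture a governed catalog is the sketch below. It is a simplified assumption of how such a catalog could look, not the API of any particular agent framework: the `Tool` fields, the `kyc:verify` permission string and the `verify_identity` handler are hypothetical. Each call is checked against the tool's permission boundary and its input and output contracts.

```python
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet


@dataclass(frozen=True)
class Tool:
    name: str
    purpose: str                       # metadata shown to the reasoning layer
    required_inputs: FrozenSet[str]    # input contract
    output_fields: FrozenSet[str]      # output contract
    permission: str                    # permission boundary
    handler: Callable[[dict], dict]


class ToolCatalog:
    def __init__(self) -> None:
        self._tools: Dict[str, Tool] = {}

    def register(self, tool: Tool) -> None:
        self._tools[tool.name] = tool

    def call(self, name: str, args: dict, granted: set) -> dict:
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        tool = self._tools[name]
        if tool.permission not in granted:
            raise PermissionError(f"{name} requires {tool.permission}")
        missing = tool.required_inputs - set(args)
        if missing:
            raise ValueError(f"{name} missing inputs: {sorted(missing)}")
        result = tool.handler(args)
        if set(result) != tool.output_fields:
            raise ValueError(f"{name} violated its output contract")
        return result


catalog = ToolCatalog()
catalog.register(Tool(
    name="verify_identity",
    purpose="Check an identity document against a verification service",
    required_inputs=frozenset({"document_id"}),
    output_fields=frozenset({"verified"}),
    permission="kyc:verify",
    # Fake check for illustration; a real handler would call a service.
    handler=lambda args: {"verified": args["document_id"].startswith("DOC-")},
))
```

A skill, in this picture, would simply be a named function that calls several catalog entries in sequence toward one objective.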

Before control returns to the deterministic lane, the agentic runtime can also perform a structured self-check. It can verify that required conditions are satisfied, confirm alignment with policy constraints and ensure that any necessary approvals have been identified. In technical discussions, this is often described as reflection.
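A self-check of this kind could be as simple as the following sketch. The `POLICY` dictionary, its keys and the shape of `result` are assumptions chosen for illustration. The function returns a list of problems, and only an empty list would allow the handback to proceed.

```python
from typing import List

POLICY = {  # hypothetical policy constraints
    "required_evidence": ["identity_check", "sanctions_screen"],
    "allowed_steps": {"continue", "additional_verification", "manual_review"},
    "steps_needing_approval": {"manual_review"},
}


def reflect(result: dict, policy: dict = POLICY) -> List[str]:
    """Return a list of problems; an empty list means the result can be handed back."""
    problems = []
    # Required conditions: every mandated piece of evidence is present.
    for item in policy["required_evidence"]:
        if item not in result.get("evidence", {}):
            problems.append(f"missing evidence: {item}")
    # Policy alignment: the recommendation is one the policy permits.
    step = result.get("recommended_step")
    if step not in policy["allowed_steps"]:
        problems.append(f"step not allowed: {step}")
    # Approvals: steps that need sign-off must name who will provide it.
    if step in policy["steps_needing_approval"] and not result.get("approver_role"):
        problems.append(f"no approver identified for: {step}")
    return problems
```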

Taken together, these patterns do not introduce unchecked autonomy. They provide a structured way to manage contextual synthesis and dynamic coordination without allowing adaptive logic to diffuse across SPA code and orchestration services. Deterministic systems continue to enforce authoritative state transitions. The Agent Tier prepares the conditions under which those transitions occur.

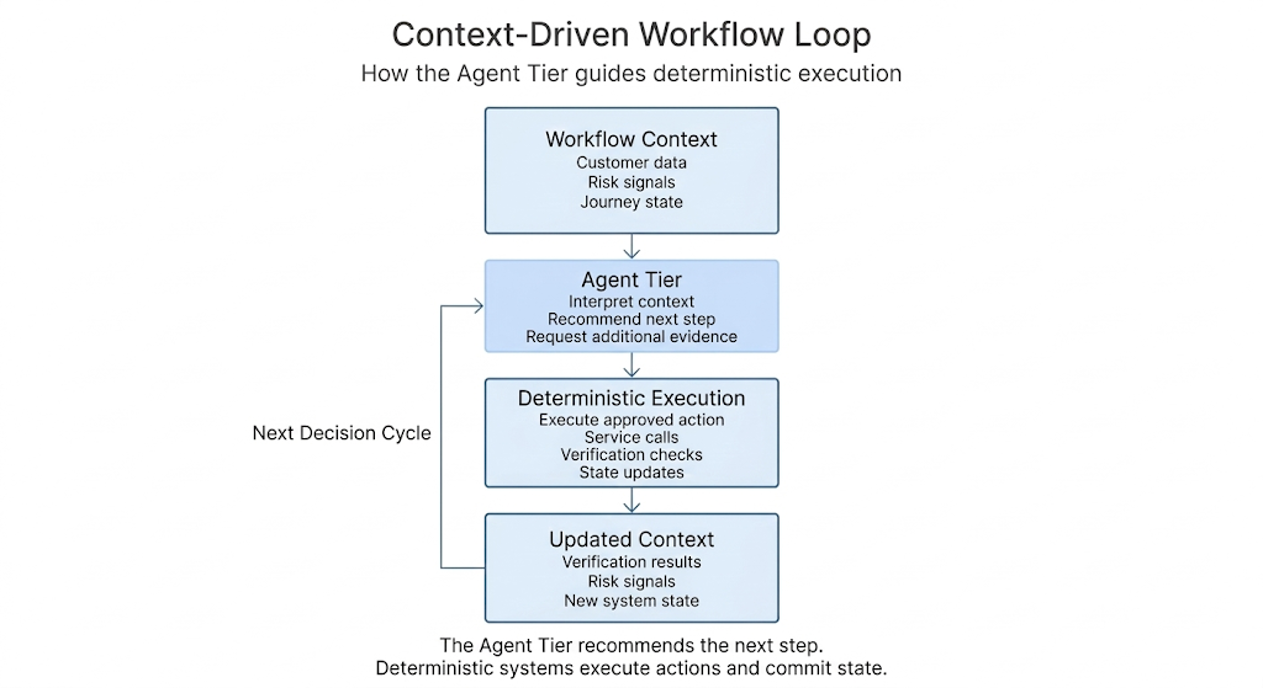

In many implementations, the Agent Tier does not directly control the workflow. Instead, it recommends the next step based on the available context. The deterministic tier remains responsible for execution. After each step is completed — retrieving evidence, invoking a verification service or preparing a review case — the updated context is returned to the Agent Tier, which evaluates the new state and recommends the next action. In this model, contextual reasoning informs progression while deterministic systems continue to enforce authoritative state transitions.

Returning to the onboarding example, the Agent Tier changes how the journey adapts to each applicant. The deterministic tier still executes core steps such as creating the customer profile, enforcing regulatory checks and committing account state in core systems. The Agent Tier evaluates the evolving context — customer relationships, fraud signals, identity verification results and available documentation — and recommends whether the workflow can proceed along the clean path, trigger additional verification or escalate to manual review. The result is not a new onboarding process but a workflow that adapts its progression dynamically while preserving the deterministic controls required for regulated operations.

Conceptually, the interaction between contextual reasoning and deterministic execution can be understood as a simple runtime loop, shown in image 2 above.

Image: Nitesh Varma

The workflow progresses through a continuous loop in which contextual reasoning recommends the next step, deterministic systems execute it and the resulting context feeds back into the next recommendation.
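The loop can be sketched as a small state machine in which the recommendation is advisory and the transition table is authoritative. The state names, the `ALLOWED` table and the `recommend` stand-in are hypothetical; the sketch assumes that any recommendation outside the table is downgraded to manual review rather than executed.

```python
from typing import Callable, List

# Deterministic tier: the only transitions that may ever occur (illustrative).
ALLOWED = {
    "STARTED": {"IDENTITY_VERIFIED", "MANUAL_REVIEW"},
    "IDENTITY_VERIFIED": {"EXTRA_CHECK_DONE", "ACCOUNT_OPENED", "MANUAL_REVIEW"},
    "EXTRA_CHECK_DONE": {"ACCOUNT_OPENED", "MANUAL_REVIEW"},
}
TERMINAL = {"ACCOUNT_OPENED", "MANUAL_REVIEW"}


def run_journey(
    context: dict,
    recommend: Callable[[str, dict], str],
    max_steps: int = 10,
) -> List[str]:
    state, history = "STARTED", ["STARTED"]
    for _ in range(max_steps):
        if state in TERMINAL:
            break
        target = recommend(state, context)          # Agent Tier recommends
        if target not in ALLOWED[state]:            # deterministic tier validates
            target = "MANUAL_REVIEW"
        state = target                              # deterministic tier executes
        history.append(state)
        context = {**context, "last_state": state}  # updated context feeds back
    return history


def recommend(state: str, context: dict) -> str:
    """Stand-in for contextual reasoning over the evolving context."""
    if state == "STARTED":
        return "IDENTITY_VERIFIED"
    if state == "IDENTITY_VERIFIED" and context.get("fraud_signal") == "elevated":
        return "EXTRA_CHECK_DONE"
    return "ACCOUNT_OPENED"
```

A low-risk applicant follows the clean path, an elevated fraud signal adds one verification step, and a recommendation to skip straight to account opening is refused by the table.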

Governing adaptive systems without losing control

Separating contextual reasoning from deterministic execution clarifies responsibility but does not eliminate risk. In regulated environments, adaptive sequencing must operate within explicit governance boundaries.

The trust and operations overlay represents cross-cutting controls across the runtime: Audit logging, approval gates, observability, security enforcement and lifecycle management. Within this structure, authoritative state transitions remain deterministic. Core systems continue to create client profiles, enforce limits, record disclosures and apply regulatory thresholds. The Agent Tier may influence progression, but final state changes occur only through controlled interfaces.

This containment boundary preserves explainability. When progression changes — for example, when additional verification is triggered or escalation occurs — institutions must be able to reconstruct why. Which signals were assembled? Which tools were invoked? What reasoning produced the recommendation? Concentrating contextual evaluation within a defined runtime layer makes that traceability possible.
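One possible shape for such a trace is a structured decision record written at every recommendation. The sketch below is an assumption about what a record might contain; `DecisionRecord`, `AuditLog` and the field names are hypothetical, and an in-memory list stands in for a real append-only store.

```python
import json
from dataclasses import asdict, dataclass
from typing import List


@dataclass(frozen=True)
class DecisionRecord:
    case_id: str
    signals: dict             # which signals were assembled
    tools_invoked: List[str]  # which tools were invoked
    rationale: str            # what reasoning produced the recommendation
    recommendation: str
    logic_version: str        # ties the decision to a reasoning-logic version


class AuditLog:
    """In-memory stand-in for an append-only audit store."""

    def __init__(self) -> None:
        self._lines: List[str] = []

    def append(self, record: DecisionRecord) -> None:
        # Serialize immediately so later mutation cannot alter the record.
        self._lines.append(json.dumps(asdict(record), sort_keys=True))

    def explain(self, case_id: str) -> List[dict]:
        return [r for r in map(json.loads, self._lines) if r["case_id"] == case_id]
```

Because the Agent Tier is the only place contextual evaluation happens, it is also the only place that needs to write these records.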

Operational experience reinforces the need for these guardrails. Engineering discussions of production agent systems emphasize constrained tool access, explicit action catalogs, bounded iteration and strong observability. In enterprise environments, contextual reasoning must likewise operate through governed tools and visible control points.

Approval gates remain part of this structure. High-risk actions such as credit issuance, account restrictions, large payments or regulatory filings may still require human authorization regardless of how the progression was determined. Reflection inside the Agent Tier can validate readiness, but authorization remains explicit.
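An approval gate of this kind sits on the execution side, not the reasoning side. A minimal sketch, assuming a hypothetical `HIGH_RISK_ACTIONS` set and an `approved_by` argument that names the human authorizer:

```python
from typing import Optional

# Illustrative list, mirroring the examples in the text above.
HIGH_RISK_ACTIONS = {"issue_credit", "restrict_account", "large_payment", "regulatory_filing"}


class ApprovalRequired(Exception):
    """Raised when a high-risk action is attempted without authorization."""


def execute(action: str, approved_by: Optional[str] = None) -> str:
    # The gate applies regardless of how the action was recommended.
    if action in HIGH_RISK_ACTIONS and not approved_by:
        raise ApprovalRequired(f"{action} needs human authorization")
    suffix = f" (approved by {approved_by})" if approved_by else ""
    return f"executed {action}{suffix}"
```

The gate does not inspect who or what recommended the action, which is what keeps authorization explicit.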

Lifecycle discipline is equally important. Changes to models, identity providers, tool contracts or orchestration logic can alter workflow behavior. The Agent Tier should therefore operate as a governed platform capability with versioned reasoning logic, controlled tool catalogs and defined testing and rollback mechanisms.
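Versioning and rollback can be illustrated with a small registry. This is a sketch under assumptions, not a description of any specific platform: `VersionedRegistry` and its methods are hypothetical, and the configuration is reduced to a plain dictionary holding a tool list.

```python
from typing import Dict, List


class VersionedRegistry:
    """Holds immutable, versioned configurations and tracks which one is active."""

    def __init__(self) -> None:
        self._versions: Dict[str, dict] = {}
        self._activations: List[str] = []  # activation order, newest last

    def publish(self, version: str, config: dict) -> None:
        if version in self._versions:
            raise ValueError(f"{version} already published; versions are immutable")
        self._versions[version] = dict(config)

    def activate(self, version: str) -> None:
        if version not in self._versions:
            raise KeyError(f"unknown version: {version}")
        self._activations.append(version)

    def rollback(self) -> str:
        if len(self._activations) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self._activations.pop()
        return self._activations[-1]

    @property
    def active(self) -> dict:
        if not self._activations:
            raise RuntimeError("no version has been activated")
        return self._versions[self._activations[-1]]
```

The same pattern could apply to reasoning logic, tool contracts or prompts, with testing placed between `publish` and `activate`.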

The objective is not to eliminate probabilistic reasoning but to contain it within observable workflows and governed boundaries. As adaptive capabilities expand, the architectural question is not whether contextual reasoning will exist, but whether it is diffused across the stack or concentrated within a controlled runtime layer.

Architectural leadership in an adaptive era

Introducing an Agent Tier adds a new runtime component, but enterprise complexity is not new; it is already dispersed across channel code, orchestration services, rule engines and proliferating conditional branches. The architectural question is not whether complexity exists, but where it resides. As fraud models evolve, verification technologies improve and regulatory expectations shift, adaptive capabilities will continue to expand.

I believe architecture must evolve from enumerating state transitions to defining containment boundaries. Deterministic systems enforce regulatory and operational requirements and remain responsible for authoritative state changes. Adaptive reasoning operates within explicit policy constraints and informs how workflows progress toward those outcomes. Instead of encoding every possible path in advance, enterprises can move toward context-driven workflows in which deterministic execution handles authoritative actions while the Agent Tier determines the next appropriate step based on evolving context.

This evolution does not require wholesale reinvention. It can begin with a single high-impact workflow where contextual variability is already evident. By introducing a disciplined runtime layer that mediates uncertainty while preserving deterministic control, organizations can modernize incrementally. In that sense, the Agent Tier is not simply a new feature; it is a structural response to a changing runtime reality, one that allows adaptive systems to operate within clear architectural and governance boundaries.

This article is published as part of the Foundry Expert Contributor Network.
